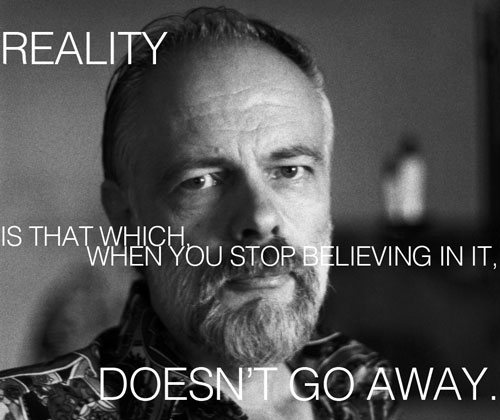

Philip K. Dick (Brain Pickings, http://www.brainpickings.org/index.php/2013/09/06/how-to-build-a-universe-philip-k-dick/). Or, stated another way:


Reliability is that which, when you stop measuring it, doesn’t go away.

Products fail. That is a reality. It is messy, confusing, and not always obvious why. Products fail: they cease to function, they are the wrong color, they are too expensive, they were a mistaken purchase, or they degrade, crack, discolor, or fracture.

There are more ways for products to fail than there are customers. Describing the arrival rate of failures, whether making a prediction or summarizing the data gathered from field returns, is important. We need to understand, and in some cases react to, the failures, and even more importantly, a change in the rate of failures. We also want to know whether product failures are likely to exceed the expected number (warranty expenses) and whether our estimates of field failures are correct. In maintenance situations, we want to predict needed spares and downtime. When we talk clearly about failure, especially the rate of failure over time, we make better decisions.
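One way to describe the rate of failure over time is to split service life into age intervals and, for each interval, divide the failures that occurred there by the total unit-months of operation accumulated there (a piecewise-constant failure rate estimate). The sketch below is illustrative only: the `Unit` record, the function name, the use of months as the age unit, and the example data are all assumptions, and units that have not failed are treated as censored at their current age.

```python
from dataclasses import dataclass


@dataclass
class Unit:
    """Hypothetical field record for one unit."""
    age: float    # months in service at failure, or at last observation if still running
    failed: bool  # True if the unit failed at `age`; False means censored (still running)


def interval_failure_rates(units, width, n_intervals):
    """Piecewise-constant failure rate per age interval [k*width, (k+1)*width).

    rate = failures in the interval / unit-months of exposure in the interval.
    Returns NaN for intervals with no exposure (no unit has reached that age).
    """
    rates = []
    for k in range(n_intervals):
        lo, hi = k * width, (k + 1) * width
        # Time each unit spent inside [lo, hi); zero if it never reached lo.
        exposure = sum(max(0.0, min(u.age, hi) - lo) for u in units)
        # Failures whose age falls inside the interval.
        failures = sum(1 for u in units if u.failed and lo <= u.age < hi)
        rates.append(failures / exposure if exposure > 0 else float("nan"))
    return rates


if __name__ == "__main__":
    # Made-up example: three failures, three units still running.
    units = [Unit(2, True), Unit(5, True), Unit(14, True),
             Unit(12, False), Unit(24, False), Unit(24, False)]
    width = 6
    for k, rate in enumerate(interval_failure_rates(units, width, n_intervals=4)):
        print(f"months {k * width:>2}-{(k + 1) * width:>2}: {rate:.4f} failures per unit-month")
```

Comparing intervals shows whether the rate falls, holds steady, or rises with age, and comparing the same interval across production periods is one way to spot a change in the rate. Multiplying an interval's rate by the unit-months the installed base will spend in that interval gives a rough expected failure count for spares planning, assuming that rate continues to hold.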

Measurement systems

Our tracking methods matter.

For example, years ago I ran across a group that tracked the arrival of failures coming back from the field on a weekly basis. They noticed that the return count increased when shipments increased and declined when shipments declined, so they decided to normalize the returns as a percentage that should be comparable over time and account for changes in shipments. Each week, the graph plotted the returns logged that week divided by that week’s product shipments. The returns, however, did not come from the units shipped that week; they came from units shipped in previous weeks, up to a year earlier. The percentage was a meaningless value because the return count had nothing to do with the current shipments.

Another team also counted failures per week and included a Pareto of failure modes in the presentation. The report covered a product screening step implemented to identify possible early life failures or factory mistakes. They would receive 45 units in a week for screening and find perhaps 5 failures. This ratio of failures to units tested does make sense. A little investigation, however, revealed that the 45 units did not all come from new production: a few were refurbished field returns considered ready for shipment, and a few were units that had previously failed the screen, received repairs, and were ready for retesting. When I asked for the failure ratio for each of the three streams, the team found it difficult to provide.
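Both reports could be restructured around when each unit shipped and where it came from. The sketch below is a hypothetical illustration, not either team’s actual system: returns are assumed to be recorded as `(ship_week, return_week)` pairs, screening results as `(stream, failed)` pairs, and the function names, stream labels, and numbers are made up. It contrasts the misleading weekly ratio with the fraction of each shipment week’s units returned within a fixed window, and splits the screening failure ratio by source stream.

```python
from collections import Counter


def naive_weekly_ratio(shipments, returns, week):
    """The misleading metric: returns logged this week / units shipped this week.
    Most of the numerator comes from units shipped in earlier weeks."""
    logged = sum(1 for _ship_week, ret_week in returns if ret_week == week)
    return logged / shipments[week]


def cohort_return_fraction(shipments, returns, window, last_week):
    """Fraction of each shipment week's units returned within `window` weeks of shipping.

    `last_week` is the most recent week whose returns are fully logged; cohorts
    whose window extends past it are skipped because their counts are incomplete.
    """
    early = Counter(ship for ship, ret in returns if ret - ship < window)
    return {
        week: early[week] / shipped
        for week, shipped in shipments.items()
        if week + window - 1 <= last_week
    }


def screen_failure_ratio_by_stream(results):
    """Failure ratio per stream from (stream, failed) pairs."""
    tested, failed = Counter(), Counter()
    for stream, did_fail in results:
        tested[stream] += 1
        failed[stream] += int(did_fail)
    return {stream: failed[stream] / tested[stream] for stream in tested}


if __name__ == "__main__":
    shipments = {1: 100, 2: 200, 3: 50}  # units shipped per week (made up)
    returns = [(1, 2), (1, 5), (2, 3), (2, 3), (2, 6), (3, 4)]  # (ship_week, return_week)
    print("naive week 3:", naive_weekly_ratio(shipments, returns, 3))
    print("4-week return fraction by ship week:",
          cohort_return_fraction(shipments, returns, window=4, last_week=6))

    # 45 units, 5 failures, split across three hypothetical streams.
    results = ([("new", True)] * 2 + [("new", False)] * 34
               + [("refurbished", True)] * 1 + [("refurbished", False)] * 4
               + [("retest", True)] * 2 + [("retest", False)] * 2)
    print(screen_failure_ratio_by_stream(results))
```

With these made-up numbers, the naive ratio for week 3 is 0.04, twice the 0.02 of week 3’s own shipments returned within four weeks, because both returns logged in week 3 were units shipped in week 2. Likewise, the pooled 5 of 45 hides that, in this invented split, retested units fail far more often than new production.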

Summary

In both cases, keeping track of the time to failure was not difficult, yet the time-to-failure information was not presented. When we set a failure rate goal, the reporting structure tends to track failure rates. When the goal does not define a duration or the expected changes in failure rate over time, the reported rates may not include a duration either. Setting a goal and not measuring it makes the goal hollow. Making measurements or tracking results without a goal is inefficient. Knowing when to make changes or address issues is just one decision that depends on the combination of goals and metrics. The same is true for reliability: setting clear goals, and gathering and clearly understanding the data, lets us make the right decisions. How do you gather and track reliability information? Is it working, or is it misleading?