Math, Statistics, and Engineering

In college, Mechanics was a required class from the civil engineering department. It included differential equations.

Luckily for me, I also enjoyed a required course for my physics degree called analytical mechanics. It used Lagrangian and Hamiltonian equations to derive a wide range of formulas for solving mechanics problems.

In the civil engineering course, the professor worked through the derivations during the lectures, then expected us to use the right formula to solve a problem. He even gave us a ‘cheat sheet’ with an assortment of derived equations. We just had to identify which equation to use for a particular problem and ‘plug-and-chug’, that is, just work out the math. It was boring.

Learning to Understand

Meanwhile, in the physics course, we learned how the math tools of differential calculus worked to help us solve a wide array of problems. This included all the equations worked out in the civil engineering course and more. The difference was being able to make just the right tool vs just picking one up on occasion.

We learned the math, and how to use it well, in the physics course. The engineering course expected us to master addition, subtraction, multiplication, and division, which was about the most difficult math in the course. In other words, not taxing at all.

Reliability and Math

Reliability engineering uses math. Sorry if this is new to you, yet calculating a simple average (aka MTBF) is a simplification of just one of the many formulas available for our use.
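To make the point about the simple average concrete, here is a minimal sketch in Python. The failure times are made-up values for illustration, and the helper `exponential_reliability` is a hypothetical name, not something from a reliability package. It assumes a constant failure rate, which is exactly the assumption that quietly rides along with an MTBF value.

```python
import math

# Hypothetical times to failure in hours (illustrative values, not real data).
times_to_failure = [120.0, 340.0, 560.0, 910.0, 1570.0]

# MTBF as commonly computed: total operating time divided by number of failures.
mtbf = sum(times_to_failure) / len(times_to_failure)


def exponential_reliability(t, mtbf):
    """Reliability at time t, ASSUMING a constant failure rate of 1/MTBF."""
    return math.exp(-t / mtbf)


print(f"MTBF = {mtbf:.0f} hours")                                   # MTBF = 700 hours
print(f"R(MTBF) = {exponential_reliability(mtbf, mtbf):.3f}")       # R(MTBF) = 0.368
```

Under that assumption only about 37% of units survive to the MTBF, which is not what most people picture when they hear "mean time between failures". The average alone says nothing about whether the constant failure rate assumption holds.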

We use math to provide summaries, reports, tracking, and when possible to reveal insights. We should use math to consider:

- diffusion rates,

- cooling rates,

- relations between equipment settings and wear rates,

- relations between process stability and field failure rates.

We have data all about us, and we are the engineers expected to make sense of it.

You may not have used calculus lately to derive a cumulative distribution function based on your data described by a novel histogram shape. You may not have employed differential equations to solve a thermal transfer problem. You certainly should understand the equations you (or your computer) are using, though.

Statistics is Just Math

A lot of what we deal with is variability. Holes, even cut by the same drill bit on the same material and machine, differ in diameter. You already know this: everything varies. Our job is to understand this variability and how it impacts the reliable performance of the system.

Statistics is the language of variability. It is just math. Mostly it is basic math, including addition, subtraction, and so on, along with a few fancier tools like summation and product. On occasion we may need calculus. We rely on regression analysis to fit a distribution to the data. Statistics is a collection of math tools.
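As one illustration of regression used to fit a distribution, here is a hedged sketch of median rank regression for a two-parameter Weibull. It assumes complete (uncensored) failure data, uses Benard's approximation for the median ranks, and regresses the transformed probability on log time; some practitioners regress the other way around. The function name `fit_weibull_mrr` and the failure times are hypothetical.

```python
import math


def fit_weibull_mrr(times):
    """Fit a two-parameter Weibull by median rank regression.

    Assumes complete (uncensored) failure times. Returns (beta, eta).
    """
    t = sorted(times)
    n = len(t)
    xs, ys = [], []
    for i, ti in enumerate(t, start=1):
        f = (i - 0.3) / (n + 0.4)  # Benard's median rank approximation
        xs.append(math.log(ti))
        ys.append(math.log(-math.log(1.0 - f)))
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    # Ordinary least squares for y = slope * x + intercept.
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    beta = sxy / sxx                      # Weibull shape is the slope
    intercept = y_bar - beta * x_bar
    eta = math.exp(-intercept / beta)     # from y = beta*ln(t) - beta*ln(eta)
    return beta, eta


# Hypothetical failure times in hours (illustrative values only).
failure_hours = [105.0, 230.0, 310.0, 480.0, 620.0, 890.0]
beta, eta = fit_weibull_mrr(failure_hours)
print(f"shape beta = {beta:.2f}, scale eta = {eta:.0f} hours")
```

The arithmetic is nothing more than sums, logs, and a division. Knowing that the fit is a straight line through transformed data is what lets you judge whether the line, and so the distribution, is a reasonable description of the data.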

Do The Math

We’re engineers or managers interested in reliability. We have data, or should find it, as it is there for the analysis. We have been exposed to a broad range of math tools. You may not have realized the value of calculus or statistics during school. If you were lucky enough to have a professor who helped (forced) you to understand the way math works to help you solve problems, to really understand the math, then you may already be doing the math.

If not, if you are groping to find the simplest formula to get a basic analysis done or to create a summary, maybe even, alas, just the MTBF calculation, because the ‘more difficult’ math has been just out of reach for you, then it’s time to open the books again. If you sold your college textbooks on math and statistics, get new ones. Learn the math and solve the real-world problems you have in front of you every day.

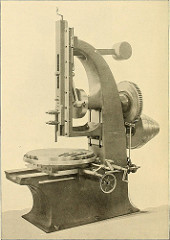

Recently, I’ve been buying and reading older textbooks and reference works on quality and reliability engineering. What has struck me is the amount of math in the older works. The newer texts include more pictures that adorn the pages without any practical value. As engineers, are we losing our ability to enjoy using math tools?

How do math and statistics play into your day? What was the last formula you used and why did you select that particular formula? Do you understand the math behind the formula, including the assumptions required to use it? Let us know how understanding the math has made a difference in your work.

These days, thanks to Microsoft Excel, all the calculations are done for us. We just need to know what calculations have to be done, set up the formulas, and plug in the numbers. The calculations are done, the charts are built. We just have to interpret the results, draw conclusions, and report the results of the analysis. It is simple. :)

Very true Michael, and with Weibull++, JMP, R, and other packages much of the actual calculating is done for us quickly. That also makes misuse and misunderstanding of the chosen formulas easy. The lack of understanding of the basics of the math involved, not of the calculations, is troublesome.

For example, could you explain why the control limits are roughly plus/minus three standard deviations from the mean on an X-bar chart? Why not 2? And, how important is the assumption of normality to the interpretation of the control chart?
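One piece of the answer can be sketched with a few lines of Python. This is only the normal-theory part, and it assumes the plotted statistic is normally distributed, the process is in control, and the points are independent. The function name `two_sided_tail` is hypothetical.

```python
import math


def two_sided_tail(k):
    """P(|Z| > k) for a standard normal Z, via the complementary error function."""
    return math.erfc(k / math.sqrt(2.0))


for k in (2.0, 3.0):
    p = two_sided_tail(k)
    # With an in-control process, p is the chance a single point signals falsely.
    print(f"+/-{k:.0f} sigma: false alarm prob = {p:.4f}, about 1 in {1.0 / p:.0f} points")
```

Under those assumptions, limits at 2 give a false alarm roughly once in every 22 points, while limits at 3 give one roughly once in every 370. Where to set the limits is then a trade between chasing false alarms and missing real shifts, and the numbers change if the normality assumption does not hold.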

Not to put you on the spot, yet using Excel does not mean one understands the math.

Cheers,

Fred

Fred, I agree 100% that using Excel does not mean understanding the math, in terms of why we use three standard deviations and not two, and so on. The important part here is to know that we have to use 3*STD in the process control world (the curious one will easily find out why with our “friend” Google). In the same way, one does not have to know how the combustion engine works in order to drive the car, but at least one has to know to put gas in it or, pretty soon (if not already), to charge the battery.

There is a joke among mathematicians: “It is evident that B follows from A,” skipping at this point several pages of math proving the statement. In math, to prove a new theorem we refer to several that have already been proven in the past as givens. It is good to know all the “whys”, but it is not necessary in order to use all the tools based on them.

Hi Michael,

We do rely on the work of those before us to enjoy the ability to solve complex problems relatively quickly. We do not have to derive everything from scratch, I agree.

It’s the use of the tools without fully understanding them that I have a problem with. To use the tools well, we do need to understand the math, logic, and principles behind them. It’s the proper use, and using math well, that takes a bit more than looking up a formula via Google.

We have the tools today, like Excel or Mathematica, to employ formulas quickly – we can do so and find good or poor answers, quickly.

I once had an argument with a student about an answer they provided on a quiz. They postulated that since their calculator provided the answer, it was right. That was the sum total of their argument. They missed a negative sign, and the result they believed was true amounted to a negative mass for an object. Despite the logic of working on a problem to estimate the mass of an object and getting a negative value, they insisted they were right. It’s that kind of problem I have with math understanding: the tools, like Excel, just make getting a number easier and allow us to skip the understanding a little too easily.

Cheers,

Fred

Fred,

Cannot disagree. Math became a science that is applied to other sciences. To correctly interpret the result of a calculation, one has to know and understand the subject (object) it is applied to. At our current level of knowledge of nature we know nothing about the existence of negative mass. Maybe it will be discovered in the future, but by way of correct calculations first, the same way Paul Dirac predicted the positron when calculating the charge of the electron. As we know, he was solving a square root and got plus and minus results. At that time it was known that the electron carries a negative charge. Trying to make sense of the result of the calculations, he came to the conclusion that the positron exists. Years later the positron was discovered experimentally. But of course, it takes doing the calculations correctly, and being a genius. :) In our everyday life, when we use math as a tool, we have to know and understand the subject (object) we are working on in order to draw the right conclusions.

Negative mass has still not been discovered as of today :)

Interestingly, when I worked for a jet engine design company, we did not use 3 sigma control limits on our control chart for engine margin. We used much closer to 2. The rationale was that we were willing to eat the cost of a few more false positives in order to ensure the engine had enough margin that it would not need to come back for service too soon.

Hi Merrill, SPC is just a tool to identify potential issues to address. It can be adjusted to accommodate a wide range of policies and practices. Although the way you describe it, are you talking about specifications or control limits? cheers, Fred

Depending on your assignments, research work, or work-related studies, you need math, and I mean differential equations, to come up with models such as maintenance costs, schedule time, or a PM model involving a Markov process, where variables or states change with respect to others. And thanks to Excel Solver, some of these complex equations can be solved easily for any given variable.

Thanks for the note Tugbe – having tools like Excel Solver is great, when used with understanding. cheers, Fred

Fred,

Software does not provide functionality for every practical situation. Furthermore, not understanding what’s behind the scenes of the software (the mathematics) limits your ability to provide a sound interpretation of the results for better decisions.

I completely agree with Fred’s views on this and am surprised there’s any argument to the contrary. Finite element software is a good example of the pitfalls that can arise with not knowing the underlying theory and just using software as a black box. These days one can take a 3-D model, mesh it, and solve for the stresses and strains. If one doesn’t know the theory behind FEA well enough to understand how element selection can affect errors in, for example, discretization, how can the FEA analyst be confident in his results? Or if one gets some stress at a location in the model, decides to refine the mesh for more accurate numbers, and subsequently returns a higher stress, how does that person know if he’s simply approaching infinity because of a singularity or obtaining a real result? It’s the old adage of garbage in, garbage out. I usually don’t like applying a reliability formula unless I can derive it from first principles.

Thanks for the note Felix, and good example. While I probably could not derive the Weibull PDF from first principles, I’ve a pretty good grasp of regression analysis and how to use a life distribution to summarize life data. Cheers,

Fred

Ah. Well, what I really meant to say is I like to know and understand *how* equations are derived from first principles. I wouldn’t be able to derive the Weibull distribution on the spot either, but I do have his original paper where he came up with his distribution, so I can see the assumptions that went into it. Along those lines, I’ve also gone through the proof of the theorem that gives the normal distribution as the only function that makes the arithmetic mean its most probable value. Along this way you also discover why the sum of squares and rms expressions, rather than, say, simply the absolute deviation, are used so predominantly in physics and mathematics. The insights gained by examining the mathematics behind reliability and quality formulas are indispensable, in my opinion.

Hi Felix,

Same here. Like you, I try to track down and study the original papers. I don’t always get it right, and often have to step back, do the research, and refresh my understanding. It does take time, and so many are so short of time. Yet applying a bit of understanding upfront often saves considerable time later.

Cheers,

Fred

Fred, I have 2 questions:

– what textbooks could you suggest?

– I am learning R now, do you know anyone using R for reliability?

Thanks.

Hi Yi,

Most practical, not theoretical, textbooks are very useful to identify approaches and steps to solving a problem. Designing an accelerated life test or determining sample size for a lot test, for example.

I highly recommend a book like Practical Reliability Engineering by O’Connor and Kleyner. Then follow up on your understanding of the math and its background by reading the references, especially the technical papers on the subject.

I find the textbooks that dive deep into the theory difficult to use in a practical sense, yet they do provide a good understanding of the topic. For accelerated life testing, I highly recommend Wayne Nelson’s book Accelerated Testing. It is a nice treatment of how to design and solve ALT problems, and it includes the necessary assumptions that have to be checked for each approach/formula.

On the other hand, Jerry Lawless’s book Statistical Models and Methods for Lifetime Data is much more on the theory side. While a really good book, I find it difficult to find and use the information I need to design and analyze an ALT.

For both books, I regularly track down and read the cited references to help gain a complete picture of the formulas and how to use them well.

One thing we could do on the Accendo Reliability site is explore the background, derivation, and assumptions around the common formulas found in our field.

Appreciate your input, I think that’s a good idea.

One of the points I have tried to make over and over with my engineering colleagues is to make sure they plot the data and understand what the data (and its distribution) is trying to tell them. Some engineers are so anxious to average away all of the beautiful degrees of freedom that are begging to tell the story, but the data gets silenced when the only information to escape the black hole of ignorance is the single degree of freedom – the mean.
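A small sketch of that point, using Python's standard `statistics` module and two made-up samples (the values are purely illustrative): both have the same mean, yet they tell very different stories.

```python
import statistics

# Two hypothetical samples of a measured dimension (illustrative values only).
sample_a = [9.8, 9.9, 10.0, 10.1, 10.2]
sample_b = [7.0, 8.5, 10.0, 11.5, 13.0]

for name, data in (("A", sample_a), ("B", sample_b)):
    print(
        f"{name}: mean = {statistics.mean(data):.2f}, "
        f"stdev = {statistics.stdev(data):.2f}, "
        f"range = {min(data):.1f} to {max(data):.1f}"
    )
```

Both means come out at 10, while the spread of the second sample is many times larger. Reporting only the mean would make the two look identical, which is why plotting the data first is worth the minute it takes.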

Well said Merrill. cheers, Fred